From Stick Figures to Studio Ghibli: What AI Anime Generators Are Really Doing These Days

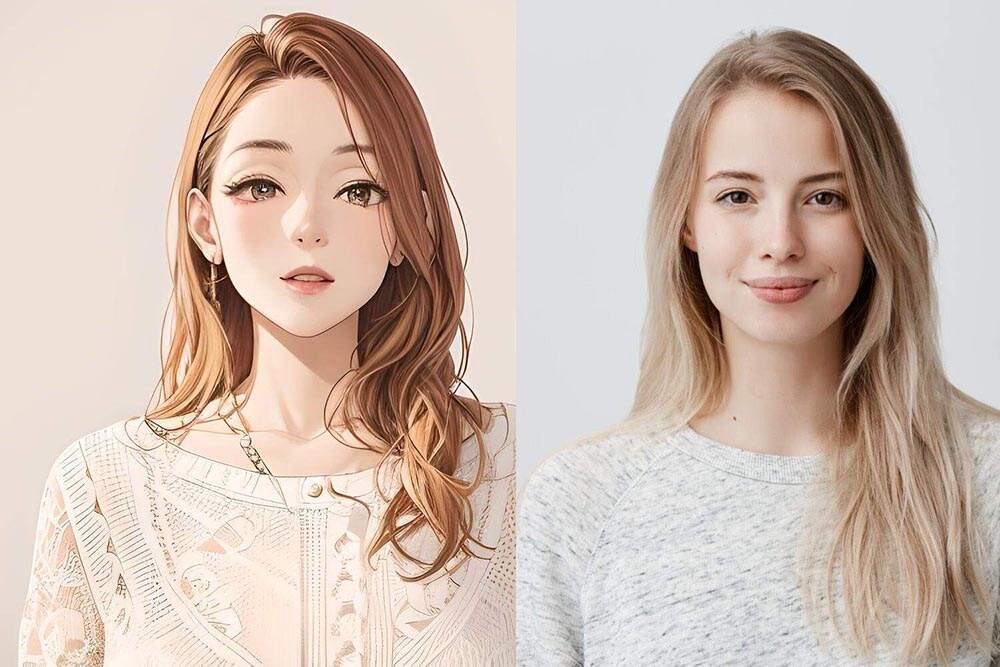

You know that moment when you describe a character you have pictured in your mind for so long, and an AI just creates it? Not exactly right, but close enough that your jaw drops anyway.

This is the magic of AI anime generators, and it is genuinely impressive.

Let's be real. Most people can't draw to save their lives. During lockdown we tried it, drew pages of potato-shaped heads, and shelved the idea. AI anime tools are the revenge arc nobody saw coming.

So what actually makes these tools tick?

Most AI anime generators are built on diffusion models, meaning the AI learns by studying huge numbers of existing anime images until it picks up what makes a face look like an anime face, or what makes lighting feel like a Makoto Shinkai film. It is a staggering level of pattern recognition.
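For the curious, the "diffusion" in the name refers to a training trick: images are gradually buried in random noise, and the model learns to undo the damage. Here is a toy sketch of just the noising half, using a made-up four-pixel "image". The function name `add_noise` and the `keep` parameter are illustrative assumptions, not taken from any particular tool.

```python
import math
import random

def add_noise(pixels, keep, rng):
    """Blend pixel values with Gaussian noise.

    keep=1.0 returns the image untouched; keep=0.0 returns pure noise.
    This is a toy illustration, not any real generator's code.
    """
    signal = math.sqrt(keep)        # how much of the image survives
    noise = math.sqrt(1.0 - keep)   # how much noise is mixed in
    return [signal * p + noise * rng.gauss(0.0, 1.0) for p in pixels]

rng = random.Random(0)
image = [0.9, 0.1, -0.4, 0.7]  # a made-up 4-pixel "image"
for keep in (1.0, 0.5, 0.0):
    print(keep, [round(v, 2) for v in add_noise(image, keep, rng)])
```

A real model sees this happen to an enormous number of images at many noise levels and learns to run the process in reverse, which is how it ends up producing a picture from what starts as static.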

Some go even deeper, such as NovelAI or Stable Diffusion models fine-tuned specifically for anime. You can get precise with your prompts: specify the art style, the color palette, or even the facial expression. Soft pastels, a melancholy face, cherry blossoms falling, and away it goes.

Then there are more general-purpose art tools, like Adobe Firefly or Midjourney. They are not specialized in anime per se, but they can be coaxed into gorgeous anime-style outputs when guided well.

The prompt is everything. Seriously.

You can't just drop the word "anime" into the prompt box and expect the aesthetic to appear. That's like handing a chef a single ingredient and asking for a tasting menu. The people getting the best outputs now treat prompting as a fine art: elaborate lighting descriptions, references to specific studios, even the thickness of the line work.

Full body, soft light, Studio Trigger, white school uniform, golden hour background, very detailed — that's what a real prompt looks like.
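If you write prompts like this often, it can help to think of them as a handful of named slots rather than one long string. Below is a minimal sketch in Python. The function name `build_prompt` and the slot names are hypothetical, chosen only for illustration, and are not tied to any particular generator.

```python
def build_prompt(framing, lighting, style, subject, background, quality):
    """Join the non-empty prompt parts into one comma-separated string."""
    parts = [framing, lighting, style, subject, background, quality]
    return ", ".join(p.strip() for p in parts if p and p.strip())

prompt = build_prompt(
    framing="full body",
    lighting="soft light",
    style="Studio Trigger",
    subject="white school uniform",
    background="golden hour background",
    quality="very detailed",
)
print(prompt)
```

Keeping the slots separate makes it easy to swap one element at a time, say the lighting or the studio reference, and see what each change does to the result.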

There are tight-knit, passionate communities swapping techniques with the same energy as Pokémon card collectors. They're intense, and honestly, it's inspiring to watch.

What are people actually using this for?

More than you'd expect. Indie game devs are generating concept art for characters without paying for every individual design. Webcomic creators are testing AI-assisted panel layouts and folding them into their workflow. Fans are generating reference images for the characters in their fanfiction, which is a whole phenomenon of its own, and they take it seriously.

One designer I met had been working on the same fantasy novel for six years. She had never been able to fully visualize her protagonist. Then one afternoon, using an AI tool, she finally caught a glimpse of her character. It broke two years of writer's block, she said.

That's not trivial. That's real impact.

But it's not all cherry blossoms and sparkly eyes.

The ethics are murky. Most of these models were trained on images scraped from sites like Danbooru or Pixiv, places where artists had been posting their work long before realizing that it would one day be used to train a machine.

Plenty of artists are upset, and rightly so, depending on how you see it. Others have begun to use AI as a creative springboard while handling the final details themselves. The responses vary widely.

Then there's the quality ceiling issue. Hands. Toes. Intricate backgrounds. The AI still fumbles these sometimes, whether subtly or in completely unhinged ways. A six-fingered hand where an elegant one should be is not the impression you were going for.

Where does it all go from here?

Fast. Honestly. Character consistency, meaning reproducing the same character across a sequence of images, has improved a great deal this year. Tools such as Fooocus, along with LoRA training through Kohya, allow for fine-tuned character styling that holds up across scenes.

Video is the frontier. AI-generated anime video clips are already circulating. Rough around the edges, sure. A year ago they were barely-moving slideshows. Now? Sometimes you could almost believe a small studio produced the clip.